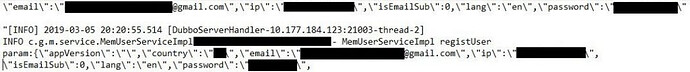

It looks like the database is unsecured and unencrypted.

At least it was a white hat attack.

> on March 1st, 2019, such firewalls were mistakenly taken down by one of our security team members for reasons still being under investigation.

That’s actually reasonable; inexperienced and undertrained caretakers can easily make a stupid mistake like that. Doesn’t excuse it, but that’s at least possible. I recall one time being asked to audit the security infrastructure at an unnamed company. They had the typical firewall-in-front-of-internal-switches setup. Lovely design diagrams. Was shown the data cabinet where these devices were happily living together. Pretty lights and neatly run cables.

But when I looked at the actual configurations, it turned out that the internal switches were actually in front of the external firewalls, essentially protecting them… Zero data security. (For those that would understand what I mean… the core switches were public facing; all traffic came to them first via a publicly addressed interface, and they were sending everything to the firewalls after that. Dumb.) As this was several decades ago and incessant hacking was still not completely pervasive, they actually had this running for a year and had no breach. They were extremely lucky to say the least. They were wondering why the firewalls never seemed to deny any traffic and wanted a reason. I gave them one. They also quickly hired a dedicated security team of IT clods who understood security.

Same company, a year later. They asked for an audit of the mail server. No clouds yet, so pretty much every corporation’s email systems were in house. They had several known and blatant issues that needed immediate attention. I told them that, in my opinion, they were not only amazingly fortunate not to have been exposed and exploited already, but that it was going to happen within a month. They half-heartedly promised to address the issues, which were presented in detail along with remediation. Simple stuff, too… known vulnerabilities that were correctable with a few updates and check boxes.

Three weeks later, on New Year’s Eve, I kid you not… I received a frantic call from this client that their email was down. And sure enough, it had been commandeered by a hacker who probably thought it was too good to be true. Not only was email down for three days, but they lost everything and had to rebuild the Exchange servers from the ground up. No, they weren’t even keeping backups. The hacker wanted nothing more than a huge platform to spam from, so they again got lucky. And blacklisted.

So, this shit happens and that’s a possibly reasonable explanation by GB. And someone’s fired.